On Tuesday I attended an interesting seminar organized by Joyce Farrell at the Stanford Center for Image Systems Engineering (SCIEN). The presenter was David Cardinal, a professional photographer, technologist, and tech journalist, who talked about Photography: The Big Picture — Current innovations in cameras, sensors and optics.

To cut a long story short,

- DSLR systems will be replaced by mirrorless systems, which deliver almost the same image quality for the same cost but are an order of magnitude lighter

- point-and-shoot cameras will disappear, because there is no need to carry around a second gadget if it brings no added value over a smartphone (the user interface still needs some work)

- phone cameras will become even better with plenoptic array sensors etc., like the one by Pelican Imaging

An interesting factoid for those in the storage industry: according to an IDC report cited by David Cardinal, the digital photographs people took in 2011 required a total of 7 ZB of storage (a zettabyte is 10^21 bytes, close to 2^70). Those photographs could not all end up on social networks: even if Flickr, for example, gives you 1 TB of free storage, with a typical American broadband connection it would take 6 months to upload that terabyte of images.
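As a rough check on the 6-month figure, the sketch below computes the sustained upstream rate needed to move 1 TB in half a year. It assumes a decimal terabyte (10^12 bytes), a link saturated around the clock, and no protocol overhead; `implied_upload_mbps` is a hypothetical helper, not part of any Flickr or IDC tool.

```python
SECONDS_PER_DAY = 86_400

def implied_upload_mbps(num_bytes: float, days: float) -> float:
    """Average upstream rate in Mbps (10**6 bit/s) needed to send num_bytes in `days` days.

    Assumes the link runs flat out the whole time with no protocol overhead.
    """
    bits = num_bytes * 8
    return bits / (days * SECONDS_PER_DAY) / 1e6

ONE_TB = 10**12        # decimal terabyte (assumption)
SIX_MONTHS = 365 / 2   # 182.5 days

print(f"{implied_upload_mbps(ONE_TB, SIX_MONTHS):.2f} Mbps")  # 0.51 Mbps
```

Under these assumptions, "6 months per terabyte" corresponds to roughly half a megabit per second of upstream bandwidth.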

David Cardinal mused that maybe people are no longer interested in photographs, but rather in photography. By that he means that people are only interested in the act of taking a photograph, not in sharing or viewing the resulting image. He therefore speculated that the only time an image is viewed might be the preview flashed just after pressing the release button.

Is that so? According to Cisco's forecast, global Internet traffic will reach 12 GB per capita in 2017, up from 5 GB per capita in 2012. For photographers these are not big numbers, considering that a large portion of Internet traffic consists of streamed movies and, to a lesser degree, teleconferences: globally, total Internet video traffic (business and consumer combined) will be 67% of all Internet traffic in 2017, up from 52% in 2012.
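The Cisco figures also bound how much non-video traffic each person generates. The sketch below simply subtracts the video share from the per-capita total; treating all non-video traffic as available for photographs is an assumption that overstates the budget, and `non_video_gb` is a hypothetical helper.

```python
def non_video_gb(total_gb_per_capita: float, video_share: float) -> float:
    """Per-capita traffic left over after removing the video share (0..1)."""
    return total_gb_per_capita * (1 - video_share)

# (year, per-capita total in GB, video share) as quoted from Cisco's forecast
for year, total, video in [(2012, 5, 0.52), (2017, 12, 0.67)]:
    print(f"{year}: {non_video_gb(total, video):.2f} GB")
# 2012: 2.40 GB
# 2017: 3.96 GB
```

For 2017 this lands close to 4 GB per capita of traffic that is not video.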

However, we might not remain stuck with today's miserable broadband service for long, and may soon receive fiber service like the inhabitants of Seoul, Tokyo, Osaka, etc. In fact, just a kilometer from here, Stanford's housing area is already on Google Fiber, and other places will soon follow, like Kansas City and its surroundings, Austin, and Provo. (In Palo Alto we have fiber in the street, but it is dark, and there is nothing in the underground pipe from the curb to my network interface.) According to Cisco, the global average broadband speed will grow 3.5-fold from 2012 to 2017, from 11.3 Mbps to 39 Mbps. Reality check: our $48/month VDSL service, rated at 6 Mbps down and 1 Mbps up, currently delivers 5.76 Mbps down and 0.94 Mbps up, while 1 km west our residential neighbors at Stanford get 151.68 Mbps down and 92.79 Mbps up on the free Google Fiber beta. In summary, there is reason to be optimistic.
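To make the reality check concrete, the sketch below estimates how long a 1 TB photo collection would take to upload at the two measured upstream rates, and checks the Cisco growth factor. It assumes a decimal terabyte (10^12 bytes), a link saturated continuously, and no protocol overhead; `upload_days` is a hypothetical helper.

```python
SECONDS_PER_DAY = 86_400
ONE_TB = 10**12  # decimal terabyte (assumption)

def upload_days(num_bytes: float, upstream_mbps: float) -> float:
    """Days needed to upload num_bytes at a constant upstream rate in Mbps."""
    seconds = num_bytes * 8 / (upstream_mbps * 1e6)
    return seconds / SECONDS_PER_DAY

# Measured upstream rates quoted in the text
for label, up in [("VDSL", 0.94), ("Google Fiber beta", 92.79)]:
    print(f"{label}: {upload_days(ONE_TB, up):.1f} days")
# VDSL: 98.5 days
# Google Fiber beta: 1.0 days

# Cisco's forecast average speeds, 2012 -> 2017
print(f"growth: {39 / 11.3:.2f}x")  # growth: 3.45x
```

Roughly three months versus one day for the same terabyte, which is the gap between the two sides of the street.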

Should Flickr plan to open a 20 ZB storage farm by 2017 in case people turn out to be interested in photographs rather than photography? Probably not. The limit is not the technology but the humans on either end: we cannot enjoy 20 ZB of photographs. Just ask your grandparents about the torture of having to endure the slide shows of their uncle's vacation.

The path to an answer to David Cardinal's question about photography vs. photographs is tortuous and arduous; at least it was for me.

In spring 1996, HP's storage division in Colorado started manufacturing CD-ROM drives for writable media. To create a market, the PC division decided to equip its new consumer PC line with the drives. The question was what the killer app could be, and they went to HP Labs for help, because Quicken files would never come close to filling a CD-ROM.

At that time my assignment was to work on a web site showcasing a new image format called FlashPix (see the image; a GIF version of the demo is still available). The Web Album folder at the bottom center of the desktop contained the demo I gave the CD-ROM people from Colorado.

In February 1991 I had dinner with Canon's president, Dr. Yamaji, where we strategized over the transition from AgX (silver halide) photography to digital. At that time Canon had the $800 Q-PIC for consumers (really a camcorder for still video images) and a $28,000 professional DSLR. By studying the product release charts of both Canon and its suppliers, we figured that it would take until 2001 for digital to replace AgX in both quality and price. For the interim, we decided to promote Kodak's Photo-CD solution as a hybrid analog-to-digital bridge.

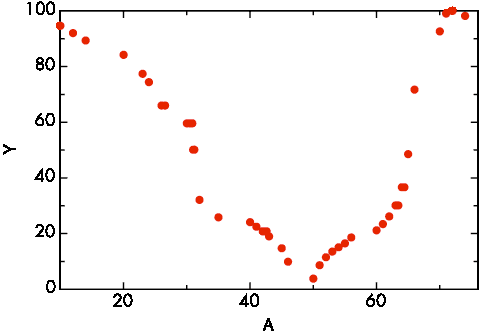

The people at Kodak told us that the average American family keeps its photographs in a shoe box, and that the average collection holds 10,000 of them. Looking at the evolution curve of disk drives, which at the time was twice as steep as Moore's law for microprocessors, we figured that the digital family would accumulate an order of magnitude more photographs, namely hundreds of thousands, because the effort and cost per photograph would be so much lower in digital.

In 1996, as I was assembling collections of a few thousand photographs for the FlashPix project, it quickly became clear that images on a disk are much more cumbersome to browse and organize than prints in a shoe box. I tried several commercial asset management programs, but they were too slow on my low-end PC.

I ended up implementing a MySQL database and maintaining a number of properties for each image, like keywords, rendering intent prediction, sharpening, special effects, complexity, size, copyrights, etc. Unfortunately, annotating the images turned out to be not only very tedious but also very difficult.
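A minimal sketch of such a per-image property store is below. It uses Python's built-in `sqlite3` in place of MySQL so it runs without a server, and the table and column names (`image`, `image_keyword`, `rendering_intent`, etc.) are illustrative assumptions based on the property list above, not the original schema. Keywords live in a separate table because one image can carry many of them.

```python
import sqlite3

# In-memory database; sqlite3 is swapped in for MySQL so the demo is self-contained.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE image (
    id INTEGER PRIMARY KEY,
    path TEXT NOT NULL,
    rendering_intent TEXT,   -- predicted rendering intent
    sharpening REAL,
    special_effects TEXT,
    complexity REAL,
    size_bytes INTEGER,
    copyright TEXT
);
-- One row per (image, keyword) pair, since an image can carry many keywords.
CREATE TABLE image_keyword (
    image_id INTEGER NOT NULL REFERENCES image(id),
    word TEXT NOT NULL,
    PRIMARY KEY (image_id, word)
);
""")

def add_image(path, keywords, rendering_intent=None, copyright_notice=None):
    """Insert one image with its keywords; returns the new image id."""
    cur = conn.execute(
        "INSERT INTO image (path, rendering_intent, copyright) VALUES (?, ?, ?)",
        (path, rendering_intent, copyright_notice),
    )
    image_id = cur.lastrowid
    conn.executemany(
        "INSERT INTO image_keyword (image_id, word) VALUES (?, ?)",
        [(image_id, word) for word in keywords],
    )
    return image_id

def find_by_keyword(word):
    """Return the sorted paths of all images annotated with `word`."""
    rows = conn.execute(
        "SELECT i.path FROM image AS i "
        "JOIN image_keyword AS k ON k.image_id = i.id "
        "WHERE k.word = ? ORDER BY i.path",
        (word,),
    )
    return [path for (path,) in rows]

add_image("wedding/001.jpg", ["wedding", "bride"], rendering_intent="perceptual")
add_image("beach/017.jpg", ["vacation", "beach"])
print(find_by_keyword("wedding"))  # ['wedding/001.jpg']
```

The schema is the easy part; as the text says, the hard part is getting someone to fill in the rows.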

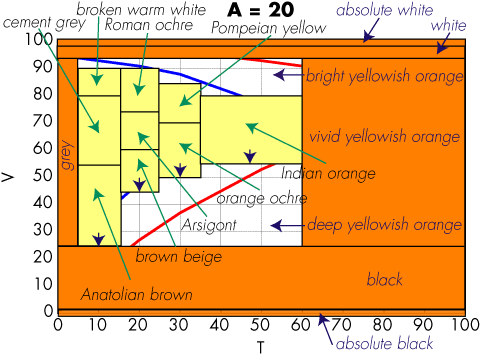

Indexing entails categorization, which is a difficult cognitive task requiring a high degree of specialization. Worse, categories change over time as iconography evolves. Categorization also has a scaling problem: a typical consumer album in 1996 required more than 500 keywords, which are hard to manage. Hierarchical keywords are too difficult for untrained people, and taxonomies (e.g., a decimal classification system) are too bulky. We proposed a metaphor based on heaping the images in baskets on a desktop.

The labels on the baskets were sets of properties we called tickets, represented as icons that could be dragged and dropped onto the baskets. The task became manageable, but the result was still nothing consumers could use.
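The post does not spell out how tickets select images, so the sketch below is only one possible reading of the basket metaphor: a basket's label is a set of tickets, dropping a ticket adds it to the label, and the basket holds every image whose properties include all of its tickets. `Basket`, `drop_ticket`, and `contents` are hypothetical names.

```python
from dataclasses import dataclass, field

@dataclass
class Basket:
    """A basket on the desktop, labeled by a set of tickets (hypothetical model)."""
    name: str
    tickets: set[str] = field(default_factory=set)

    def drop_ticket(self, ticket: str) -> None:
        # Dragging a ticket icon onto the basket adds it to the label.
        self.tickets.add(ticket)

    def contents(self, images: dict[str, set[str]]) -> list[str]:
        # Assumption: an image lands in the basket if it carries every ticket on the label.
        return sorted(name for name, props in images.items() if self.tickets <= props)

images = {
    "img1.jpg": {"wedding", "outdoor"},
    "img2.jpg": {"wedding", "indoor"},
    "img3.jpg": {"vacation", "outdoor"},
}
ceremony = Basket("Ceremony")
ceremony.drop_ticket("wedding")
ceremony.drop_ticket("outdoor")
print(ceremony.contents(images))  # ['img1.jpg']
```

Under this reading, each dropped ticket narrows the heap, so a user refines a basket by adding icons rather than by navigating a hierarchy.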

In a family there typically was a so-called photo-chronicler who would go through the shoe box and compile a photo-album. Photographs by themselves are just information: to become valuable they have to tell a story in the form of a photo-album.

The applet on the right side ran in a browser and interfaced with the content in the MySQL database running on the public HP-UX server under my desk. The idea was to reduce the work by letting any family member with time to spare fill in some of the data: we replaced the model of the mother as photo-chronicler with a collaborative effort involving the whole family, especially the grandparents, who typically have more time.

Collaborative annotation introduces a new problem, namely that each individual has their own categorization system. As described in Appendix II of HPL-96-99, there is a mathematical theory that provides a solution to this problem. An A-construct in mathematics is very similar to an ontology in computer science. The solution is to create an ontology fragment for each contributor and then map the fragments onto each other using general structure theory.

Unexpectedly, I received a Grassroots Grant, which allowed me to hire a student to rig up an interface to Stanford's Ontolingua system. As a difficult test case, we took photographs from a mixed-race, mixed-religion wedding (a small sample of the images is available).

We wrote up a conference paper (a free preprint is available) in a couple of nights, because that was the end of it: a senior manager had determined that nobody would ever have more than 25 digital photographs. The manager insisted that when people took new pictures, they would delete old ones, so that only the best 25 photographs survived. That was it, and the project was killed.

In a way, David Cardinal's photography would be an extreme form of this assertion, where only the last photograph survives, and that only for an instant.

In my view, people are too fond of their memories to just toss them. The future will not be a 20 ZB pile of pixels. Value lies only where a story can be told, and our job is to create the tools that make storytelling easy.

In retrospect, my mistake in 1996 was to treat annotation as a separate workflow step, performed after triage. Today the better approach would be to use voice input to annotate photographs while they are being taken. Currently the metadata is just the GPS coordinates, the time, and the other EXIF fields. How about having Siri ask the photographer "I see five people in the image you just took; who are they?" or "Is this still a picture of the wedding?" That is easier and more accurate than doing face recognition after the fact. Last but not least, we have the image processing algorithms to let Siri exclaim "Are you sure you want me to upload this photograph? I think it is lousy!"

I am game for uploading my share of 4 GB in photographic stories when 2017 comes around!